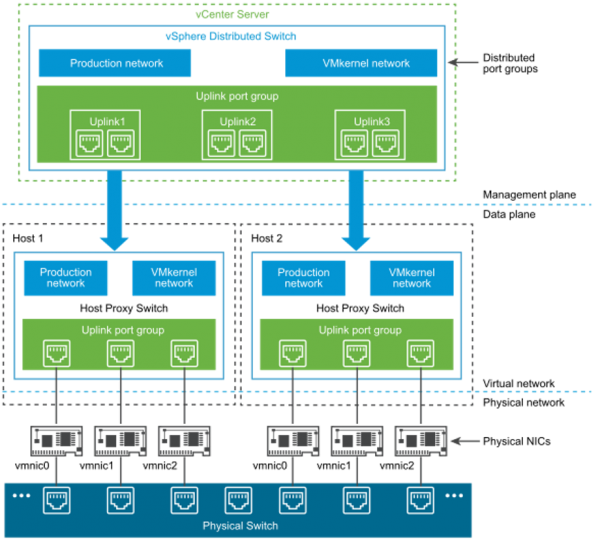

In a recent project, we decided to explore using the Cisco NX-OS 9000v virtual switch as part of our pre-deployment testing infrastructure. The virtual switch functions identically to our actual NX-OS hardware switches, but with the benefit of running purely as a virtual machine (VM) or appliance on top of our cloud infrastructure. That cloud infrastructure is a multi-node vSphere deployment that uses distributed vSwitches, each having multiple uplink port groups, to provide high availability across the network domains. Figure 1 shows the overall architecture of the distributed switch that is used in the vSphere deployment to connect VMs to the physical network.

Figure 1: vSphere Distributed Switch Architecture [from Reference 1]

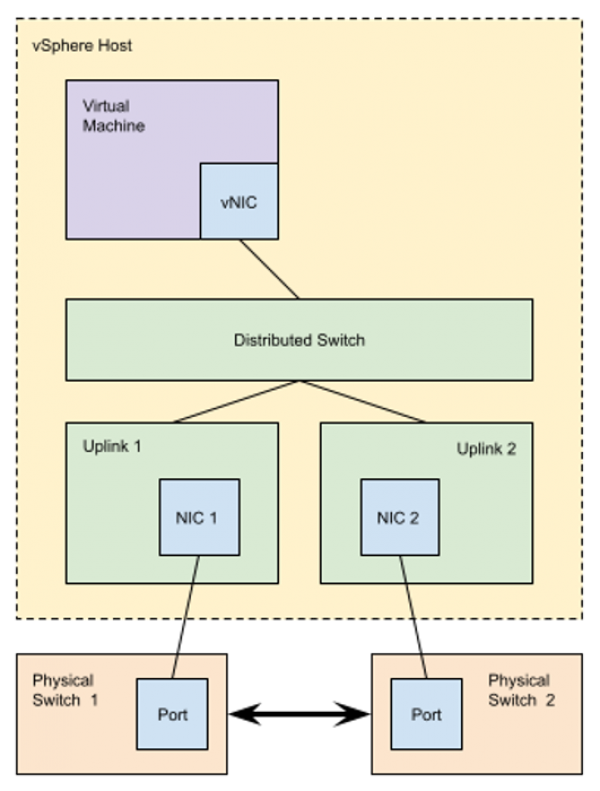

To connect a virtual machine to a “real world” network, meaning one attached to physical switches, a Distributed Port Group is created on the Distributed Switch in vSphere. For example, that Distributed Port Group may allow VLAN 35 on the physical network to be connected to the first network interface on the virtual machine, as shown in Figure 2 below. A packet from the virtual machine is forwarded to the distributed switch running on the vSphere host, which forwards it to the uplink port group, which in turn sends it out one of the physical uplink ports to the physical network switch. The selection of the uplink port is handled by the NIC Teaming and Load Balancing configuration, where load balancing is applied to traffic flowing from the VM to the physical network. The default policy used in our cluster is “Route Based on Originating Virtual Port,” which selects the uplink based on the virtual port (i.e., the virtual switch port “connected” to the VM network interface).
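The key property of “Route Based on Originating Virtual Port” for this discussion is that the uplink depends only on the virtual port, not on the source MAC address of the frame. The following is a minimal Python sketch of that idea. The modulo rule, the uplink names, the port ID, and the MAC addresses are all assumptions made for illustration; this is not VMware's actual selection algorithm.

```python
def select_uplink(virtual_port_id: int, active_uplinks: list[str]) -> str:
    """Pick one uplink for a virtual port.

    The modulo mapping is an illustrative assumption; the real policy only
    guarantees that the choice is tied to the virtual port.
    """
    if not active_uplinks:
        raise ValueError("at least one active uplink is required")
    return active_uplinks[virtual_port_id % len(active_uplinks)]


uplinks = ["Uplink 1", "Uplink 2"]  # hypothetical uplink names
vm_port_id = 7                      # hypothetical virtual port ID of the VM's vNIC

# Frames with different source MACs still enter through the same virtual
# port, so they all leave through the same uplink.
frames = [
    {"src_mac": "00:50:56:aa:bb:01", "note": "vNIC MAC assigned by vSphere"},
    {"src_mac": "02:42:ac:11:00:02", "note": "interface behind a bridge in the VM"},
]
chosen = {f["src_mac"]: select_uplink(vm_port_id, uplinks) for f in frames}
print(chosen)
```

Because the choice is per port, every frame the VM emits uses one uplink, while the redundant uplink remains a path by which the physical network can send frames back to the host.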

Figure 2: NIC Teaming and Port Allocation on a vSphere Distributed Switch [from Reference 1]

![Figure 2: NIC Teaming and Port Allocation on a vSphere Distributed Switch [from Reference 1]](/sites/default/files/blog-images/figure2-blog.png)

Figure 3 shows the approximate interconnections between the physical network and one of the vSphere hosts, including the multiple uplinks and physical switches that provide redundancy to the system. The physical switches are interconnected with a high-speed fabric that provides the failure redundancy. So, the complicated question is what happens when the VM also contains a bridge, which is the case when running the Cisco NX-OS 9000v virtual switch. The same configuration applies to a simple Linux virtual machine running a bridge, as would be used for running a Docker macvlan network.

Figure 3: vSphere Host Connected to Multiple Physical Switches

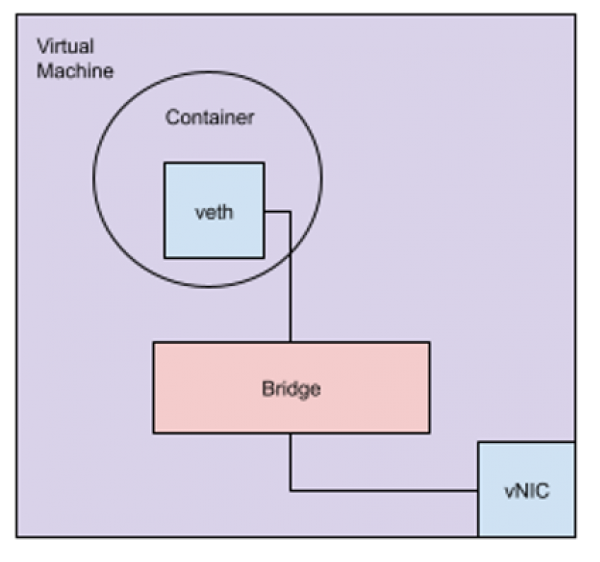

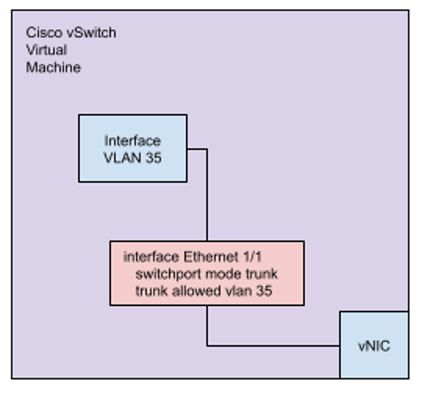

In this case, a packet sourced from an interface within the virtual machine that is connected to the bridge will not have the MAC address assigned to the virtual machine’s vNIC by vSphere. Figure 4 shows this topology within the virtual machine. The obvious solution is to enable both “Promiscuous Mode” and “Forged Transmits” on the Distributed Port Group, thus allowing the interface connected to the bridge to send and receive packets over the distributed port. It’s important to note that in the default configuration the VMware Distributed Switch and Distributed Port Groups do not enable MAC learning, and as a result will not learn the MAC address of the veth interface connected to the vNIC, which is connected to the Distributed Port Group. Figure 5 shows a similar configuration for a simple test of the Cisco vSwitch, where an IP address is assigned to a VLAN interface within the switch. In that configuration, the MAC address of the VLAN interface is decided by the Cisco NX-OS and will be “unknown” to the vSphere Distributed Port Group.

Figure 4: Linux Virtual Machine with a Bridged Interface

Figure 5: Simple Cisco vSwitch Configuration within a Virtual Machine

Now let’s trace what happens when our container, or process on the other side of the bridge in the virtual machine, sends a packet to another virtual machine or physical host. The packet “moves” over the bridge in the virtual machine, which “knows” via MAC learning to forward the packet out of the vNIC interface of the virtual machine. The packet is received by the Distributed Port Group, which forwards it out the assigned uplink to the physical switch, which in turn forwards it to the appropriate ports, as the physical switch likely has MAC learning enabled. If that forwarding process results in the packet being forwarded to the second physical NIC (i.e., the redundant link), the virtual switch will forward the packet back to our original virtual machine. Why? Because “Promiscuous Mode” is enabled on the port group serving that virtual machine’s vNIC. In this case, the virtual machine, the bridge, and the veth interface will see the duplicate packet.

This has a number of “interesting” effects. First, if that bridge is running spanning tree or a similar loop protection protocol, it will put the vNIC port into the blocking state, so no traffic can be sent or received from the veth. Second, if the bridge has MAC learning enabled, the MAC address of the veth interface is “moved” from the bridge port connected to the veth interface to the bridge port connected to the vNIC. After all, a packet with that source MAC address just arrived on that bridge port. If spanning tree and MAC learning are both disabled, the duplicate packet may be ignored or responded to. In the case of a ping echo request, the IP stack on the veth interface will likely respond, showing up as a duplicate echo response. This issue is also described in Reference 2, which is what I used to track down the solution, documented in Reference 3.
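The MAC “move” can be reproduced with a toy learning bridge. The sketch below is a simplified model written for this explanation, not the Linux bridge or NX-OS implementation; the class name, port names, and MAC addresses are hypothetical.

```python
class LearningBridge:
    """Toy Ethernet bridge: learns source MACs, forwards or floods by destination."""

    def __init__(self, ports):
        self.ports = list(ports)
        self.mac_table = {}  # MAC address -> port where it was last seen
        self.moves = []      # (mac, old_port, new_port) for every relearn

    def receive(self, in_port, src_mac, dst_mac):
        """Process one frame and return the list of ports it is sent out of."""
        old_port = self.mac_table.get(src_mac)
        if old_port is not None and old_port != in_port:
            self.moves.append((src_mac, old_port, in_port))
        self.mac_table[src_mac] = in_port

        out_port = self.mac_table.get(dst_mac)
        if out_port is None:
            return [p for p in self.ports if p != in_port]  # unknown: flood
        if out_port == in_port:
            return []  # destination appears to sit behind the ingress port: drop
        return [out_port]


VETH_MAC = "02:42:ac:11:00:02"  # hypothetical MAC of the veth interface
PEER_MAC = "00:50:56:aa:bb:99"  # hypothetical MAC of the remote host

bridge = LearningBridge(["veth", "vnic"])

# 1. The container sends a frame; the bridge learns VETH_MAC on the veth port.
first = bridge.receive("veth", VETH_MAC, PEER_MAC)

# 2. The duplicate returns through the promiscuous vNIC with the same source MAC.
duplicate = bridge.receive("vnic", VETH_MAC, PEER_MAC)

# 3. The peer's reply arrives on the vNIC, but VETH_MAC now points at the vNIC.
reply = bridge.receive("vnic", PEER_MAC, VETH_MAC)

print(bridge.moves)  # the veth MAC was relearned on the vNIC port
print(reply)         # empty list: the reply never reaches the veth
```

In this model, one returning duplicate is enough to make the bridge drop the legitimate reply, because the bridge now believes the veth MAC lives on the same port the reply arrived on.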

Fortunately, there is a solution. Per Reference 3, beginning with vSphere Distributed Switch version 6.6, MAC learning can be enabled per Distributed Port Group, which removes the need to enable Promiscuous Mode. Unfortunately, this capability is not yet exposed in the vCenter graphical interface, which makes the solution difficult to track down and a little tricky to implement if you don’t regularly use the VMware PowerCLI tools to interact with the vCenter / vSphere deployment. We have tested this in our vSphere deployment, where each host has multiple uplink ports across multiple physical switches. Once configured, the duplicate packet issue is resolved and spanning tree can be re-enabled on the Cisco virtual switch or virtual machine bridge.
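Reference 3 makes the change with PowerCLI. For readers who prefer Python, the following is a rough sketch of the equivalent reconfiguration using the pyVmomi bindings. It has not been run against the deployment described here. The type names are given as I understand the pyVmomi bindings for the vSphere 6.7 API and should be checked against your pyVmomi version. The `portgroup` argument is assumed to be a distributed port group object already retrieved from vCenter. Leaving Forged Transmits enabled, disabling MAC changes, and the limit values are assumptions, not settings stated in this article.

```python
# Desired port group settings: MAC learning on, Promiscuous Mode off.
# forged_transmits, mac_changes, and the limit values are assumptions.
MAC_LEARNING_SETTINGS = {
    "allow_promiscuous": False,
    "forged_transmits": True,
    "mac_changes": False,
    "mac_learning": True,
    "allow_unicast_flooding": True,
    "limit": 4096,
    "limit_policy": "DROP",
}


def enable_mac_learning(portgroup, settings=MAC_LEARNING_SETTINGS):
    """Reconfigure a distributed port group and return the vCenter task."""
    from pyVmomi import vim  # imported lazily; requires the pyvmomi package

    dvs = vim.dvs.VmwareDistributedVirtualSwitch
    learning = dvs.MacLearningPolicy(
        inherited=False,
        enabled=settings["mac_learning"],
        allowUnicastFlooding=settings["allow_unicast_flooding"],
        limit=settings["limit"],
        limitPolicy=settings["limit_policy"],
    )
    mac_management = dvs.MacManagementPolicy(
        inherited=False,
        allowPromiscuous=settings["allow_promiscuous"],
        forgedTransmits=settings["forged_transmits"],
        macChanges=settings["mac_changes"],
        macLearningPolicy=learning,
    )
    spec = vim.dvs.DistributedVirtualPortgroup.ConfigSpec(
        # configVersion guards against overwriting a concurrent change.
        configVersion=portgroup.config.configVersion,
        defaultPortConfig=dvs.VmwarePortConfigPolicy(
            macManagementPolicy=mac_management
        ),
    )
    return portgroup.ReconfigureDVPortgroup_Task(spec)
```

Keeping the settings in one dictionary makes it easy to review exactly what will change before calling `enable_mac_learning` on a port group.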

References:

1. https://docs.vmware.com/en/VMware-vSphere/7.0/com.vmware.vsphere.networking.doc/GUID-B15C6A13-797E-4BCB-B9D9-5CBC5A60C3A6.html
2. https://twitter.com/_chrisjhart/status/1434181434776948736?lang=en
3. https://williamlam.com/2018/04/native-mac-learning-in-vsphere-6-7-removes-the-need-for-promiscuous-mode-for-nested-esxi.html